Accelerating AI impact: A practical path from intent to execution

Federal mission leaders no longer need convincing that AI matters. The harder question is how to move from interest to sustained, defensible impact—without increasing risk, eroding trust, or stalling in pilots.

Recent federal actions signal clear support for responsible AI adoption.

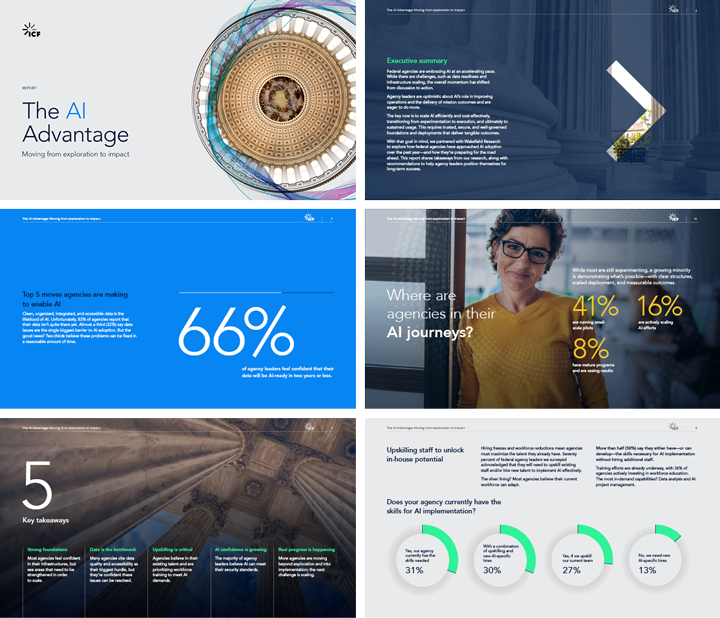

But ICF’s survey findings show that agencies face a more complex reality: progress depends less on technology breakthroughs than on how leaders align people, data, governance, and strategy around real mission outcomes.

AI adoption is an organizational challenge, not a technical one

Federal leaders consistently point to people and operating-model constraints as the primary barriers to AI progress. Seventy percent of respondents said they need to upskill staff or hire new talent to implement AI effectively, underscoring that AI success hinges on workforce readiness, not just tools.

Strategic alignment is another pressure point. Nearly a third of leaders (31%) cited the absence of a clear AI vision and roadmap, while an equal share pointed to cost concerns and uncertain return on investment. Without a shared definition of success, agencies struggle to justify funding, prioritize use cases, or sustain momentum.

Data quality and governance shape what’s possible

Data remains a foundational constraint. Thirty‑two percent of respondents identified poor data quality and inconsistency as their biggest blockers. AI systems amplify the strengths and weaknesses of the data behind them; fragmented or unreliable data can limit value before implementation begins.

Leaders are also acutely aware of the governance responsibilities that accompany AI use. Survey responses emphasize the importance of privacy protections, ethical use, and transparency. As federal guidance and frameworks continue to evolve, agencies need AI systems that support accountability and adaptability—not brittle solutions that lock in risk.

Waiting increases risk

Despite these challenges, the survey reveals a clear consensus: delaying action carries its own cost. Agencies that wait for perfect conditions risk falling further behind, losing workforce confidence, and missing opportunities to build institutional learning.

The most effective leaders are starting with intention—selecting early use cases where mission value is tangible and risk is manageable. These efforts create feedback loops that strengthen data practices, clarify governance needs, and build internal confidence.

How federal leaders can start strong

Survey insights point to several practices that help agencies move from exploration to execution:

- Focus on impact, not perfection. Target problems where AI can deliver measurable improvements, such as accelerating reviews, reducing manual errors, or surfacing insights faster.

- Invest in people. Equip teams to interpret AI outputs, improve data quality, and manage workflows. Adoption depends on trust and understanding.

- Use AI pragmatically. Generative AI can augment knowledge work using existing data, enabling early value without extensive reengineering.

- Design for transparency. Prioritize systems that allow leaders to explain how outputs were generated and how decisions were supported.

- Balance agility with accountability. Governance should evolve with capability—protecting integrity without slowing progress.

- Define success early. Avoid pilot purgatory by aligning stakeholders around clear, mission‑aligned outcomes.

When these elements advance together, agencies are better positioned to scale AI responsibly and deliver durable mission impact.

From insight to execution: ICF Fathom

To help federal agencies move from experimentation to results, we developed ICF Fathom, a suite of mission‑ready AI solutions and services designed for federal environments.

ICF Fathom brings together customizable AI agents, prebuilt accelerators, and federal domain expertise to help agencies identify high‑value use cases, deploy solutions efficiently, and scale what works—without overengineering upfront.

Success with AI is not about doing everything at once. It is about proving value early, learning quickly, and scaling with confidence.

Download the full report to explore what federal leaders say is holding AI back—and what helps move it forward.